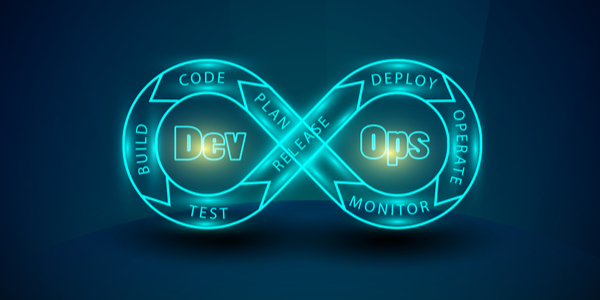

DevOps, as we all know, is the practice of joining together development and operations to streamline processes and increase application deployment agility, flexibility and maintenance efficiency.

Since its origins in the software development landscape about 10 years ago, DevOps has evolved as the cloud has evolved and come into prominence. With so many businesses actively going through digital transformation to migrate their applications to cloud resources, it’s clear that DevOps will continue to play a huge role.

But companies are at varying stages of cloud-readiness, and many are keen to rely on a hybrid mix of on-premise and public cloud resources. That makes the DevOps automation challenge a lot tougher than it first appears.

From Racks To Containers – Via Cloud

If we go right back to basics for a second: a server is the basic resource framework needed to run software. In the old days (back in the IT Dark Ages of some fifteen or so years ago), application developers and development teams had to wait forever to get servers and peripheral equipment physically installed and set up for use. They had to order the resources, wait for them to ship, screw them into the racks, and install operating systems and other software, and they often paid a hefty price for the privilege. This process often took months.

Fast-forward to 2020 and compute resources come in several flavors (on-premise or in the public cloud, virtual or bare-metal), and with proper cloud automation they can be made available for deployment in minutes.

At the same time, containerization of applications is quickly becoming mainstream – driven by the Kubernetes container orchestration and management system. So now, when a DevOps team builds an application, the platform and their software code can be deployed quickly to their resource(s) of choice – and accessed instantly in a compute cloud.

In this compute paradigm, the [virtual] server resources are no longer leading – the application containers are. Try to visualize it: a container is an isolated slice of an operating system (OS) environment that shares the host’s OS kernel rather than carrying its own. It packages the application – or a piece of it – together with the libraries, runtime and other dependencies it needs to run.

The container supplants the server in deployment importance and prevalence, and it can be deployed onto any generic compute resource available. Deploying containers across such hybrid cloud resources is often referred to as a “cloud native” deployment.

Managing Microservices

Building a custom platform is one of the core use cases of the DevOps methodology – along with CI/CD (Continuous Integration/Continuous Deployment). However, many software applications are very complex. As a result, the build and deployment themselves are often somewhat fragmented.

Traditionally, before containerization, developers might have faced the question of how to deploy an application’s database separately from the application task manager and front-end portal, each on a different [virtual] server resource. Before the proliferation of public clouds, this would be done on-premise, using various tools.

In the world of hybrid cloud, this type of deployment becomes even more complex, and the need for efficient DevOps automation is greater still. For example, the developers may have built a complex multi-tier platform with several deployment interdependencies.
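To make “deployment interdependencies” concrete, here is a minimal Python (3.9+) sketch that works out a deployment order for a hypothetical three-tier platform: a database, a task manager and a front-end portal. It assumes each service simply lists the services that must be running before it, and uses the standard library’s `graphlib.TopologicalSorter` to group services into waves that could be deployed in parallel. The service names and the `deployment_waves` helper are illustrative only; real orchestration tools track much more than start-up order.

```python
from graphlib import TopologicalSorter

# Hypothetical three-tier platform: each service maps to the set of
# services that must already be deployed before it can start.
DEPENDENCIES = {
    "database": set(),
    "task-manager": {"database"},
    "front-end-portal": {"task-manager", "database"},
}


def deployment_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group services into waves; every service in a wave can deploy in parallel."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if dependencies are circular
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all services whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave as deployed
    return waves


if __name__ == "__main__":
    for number, wave in enumerate(deployment_waves(DEPENDENCIES), start=1):
        print(f"wave {number}: {', '.join(wave)}")
```

Run as a script, it prints the database, the task manager and the portal as three separate waves. Services with no dependency on each other land in the same wave, and a circular dependency makes `prepare()` raise `graphlib.CycleError`, an early sign that the platform cannot be deployed in any order.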

In the cloud native world, different types of containerized services that are a part of the same application are known as microservices.

A great way to orchestrate and manage microservices is to use one of the popular DevOps Configuration Management (CM) tools. Kubernetes plays nicely with CM tools for container builds, cluster management and application lifecycle management, for example. Some of the more common CM tools include market leader Ansible, along with Puppet, Chef, IBM UrbanCode Deploy, and Microsoft PowerShell. It’s nice to have choices!

CloudController Is The ‘Ops’

InContinuum’s CloudController solution doesn’t care which CM tool you select. We’re agnostic of CM tools in the same way we’re agnostic of which on-premise or public cloud resource provider you have chosen. Our job is to be the ‘Ops’ in DevOps. We’re not involved in developing applications, managing their development, or managing the CI/CD framework and application lifecycle. But once that CI/CD framework is in place, CloudController orchestrates how the developed software deploys onto the resource of choice. We care about linking the software via process-driven workflows, allowing developers to send code to a deployment environment using the CM tool of their choice.
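As a rough illustration of what being CM-agnostic can mean at the workflow level, the Python sketch below keeps the workflow step identical for every tool and pushes tool-specific details into small adapters. This is not CloudController’s API: `DeploymentTarget`, `plan_deployment` and the adapter classes are hypothetical names made up for this example. The adapters only build the command line a workflow would hand off; `ansible-playbook` and `puppet apply` are real CLIs, but a production workflow would also deal with inventories, credentials and result handling.

```python
import shlex
from dataclasses import dataclass
from typing import Protocol


@dataclass
class DeploymentTarget:
    """Hypothetical deployment target: a host group plus the provider behind it."""
    host_group: str
    provider: str  # e.g. "on-premise" or "public-cloud"; the workflow never branches on it


class CMAdapter(Protocol):
    """Anything that can turn a deployment request into a CM-tool command."""
    def command(self, artifact: str, target: DeploymentTarget) -> list[str]: ...


class AnsibleAdapter:
    def command(self, artifact: str, target: DeploymentTarget) -> list[str]:
        # Run the playbook, restricted to the target's inventory group.
        return ["ansible-playbook", artifact, "--limit", target.host_group]


class PuppetAdapter:
    def command(self, artifact: str, target: DeploymentTarget) -> list[str]:
        # `puppet apply` runs on the node itself, so the target is not part of
        # the command; a workflow would run it on each node in the group.
        return ["puppet", "apply", artifact]


ADAPTERS: dict[str, CMAdapter] = {"ansible": AnsibleAdapter(), "puppet": PuppetAdapter()}


def plan_deployment(cm_tool: str, artifact: str, target: DeploymentTarget) -> list[str]:
    """One workflow step: the same call regardless of CM tool or resource provider."""
    try:
        adapter = ADAPTERS[cm_tool]
    except KeyError:
        raise ValueError(f"no adapter registered for CM tool {cm_tool!r}") from None
    return adapter.command(artifact, target)


if __name__ == "__main__":
    target = DeploymentTarget(host_group="web-tier", provider="public-cloud")
    print(shlex.join(plan_deployment("ansible", "site.yml", target)))
```

Supporting another CM tool means registering one more adapter; the workflow step and the target description stay untouched, which is the property the paragraph above describes.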

All About Cloud Native

The industry itself is going through a massive digital transformation, and most of it involves rolling out containers onto public clouds. At the current rate of change, we expect to be talking much less about on-premise data centers in three to five years. Soon, it’s going to be all about cloud and cloud native.

Smart companies that want agility and flexibility in their development environments are moving to cloud native, containerized deployments. These companies package their applications as containers and run them on an orchestration platform such as Kubernetes, often in combination with CM tooling to orchestrate container deployments.
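For readers new to Kubernetes, the sketch below shows roughly what a containerized deployment looks like from the platform’s side: a `Deployment` object naming a container image and how many replicas of it to run. It builds the manifest as a Python dictionary and emits JSON, which `kubectl apply -f` accepts just as it accepts YAML. The `deployment_manifest` helper, service name, image reference and default port are placeholders; a real manifest would typically add resource limits, health probes and a `Service` to expose the pods.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int = 2, port: int = 8080) -> dict:
    """Build a minimal Kubernetes apps/v1 Deployment for one containerized service."""
    labels = {"app": name}  # placeholder label convention
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template's labels,
            # otherwise the API server rejects the Deployment.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": port}]}
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Save the output as e.g. portal.json and apply it with `kubectl apply -f portal.json`.
    manifest = deployment_manifest("front-end-portal", "registry.example.com/portal:1.0")
    print(json.dumps(manifest, indent=2))
```

Note that nothing in the manifest says which machine or cloud the pods run on; the cluster’s scheduler decides, which is what lets the same container land on whatever compute resource is available.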

We’re Ready

CloudController is now being fitted with complete cloud native support. It’s a natural addition to our cloud management platform (CMP), and it will soon make DevOps a lot more streamlined. Stay tuned for our announcement after the summer. With a hybrid cloud orchestration tool that handles all types of service rollouts and unifies them through one portal, DevOps can be done much more efficiently.